From Playground to Prototype - Building Your Agent in Red Hat AI

|

In this lab

You will build an agent, and manage it through ArgoCD. You will then create a pipeline to trigger the agent to create a GitHub issue in your forked repository from previous modules. |

Inspect the agent files

-

In your preferred terminal environment, open

agent/code/agent.pyfrom your forked repository and view the contents. This file contains the core agent logic. Note the following:-

Multi-tool architecture (lines 52-59)

-

Agent uses 3 MCP tool groups that we’ve previously configured: openshift, websearch, and github

-

Demonstrates how to combine infrastructure monitoring, web search, and issue tracking

-

Uses ReActAgent pattern for reasoning and tool selection

-

-

Prompt engineering patterns (lines 69-94)

-

few-shot examples teach the agent expected output format

-

structured output format guides the agent’s behavior

-

{{ = Literal { in output (for showing JSON examples to the AI)

-

{variable} = Gets replaced with actual runtime value

-

.format() = Cleaner separation between "example text" and "runtime variables"

-

This pattern prevents Python formatting errors while teaching the AI the exact JSON structure to output

-

-

-

{container_name} and {pod_name} variables ensure you get the necessary logs from a PipelineRun

-

Line 90: prompt asks for logs from

{container_name} -

The correct names must be passed from the pipeline to ensure you get the correct logs. PipelineRun pods have multiple containers to account for.

-

-

Error handling (103-123, 125-160)

-

Fallback prompts if formatting fails

-

Detailed logging for debugging

-

-

-

Once you understand how the agent works, edit the

agents.pyfile with your correct username.

-

Save the file. Then, view the

agent/code/main.pyfile to understand how the agent is triggered.

Overall, the system works as a GitOps remediation / failure triage agent:

-

FastAPI server receives failure notifications

-

Agent analyzes a specific failed container logs in an OpenShift pod using MCP tools

-

Searches for solutions via web search

-

Creates GitHub issues with error summaries and solutions

Trigger pipeline to build the agent image

-

In your chosen terminal environment, create a PipelineRun using the following command:

Edit the file in the below command with the appropriate variables. Then, ensure the forked repository location is accurate in the command. oc -n userX-ai-agent create -f etx-agentic-ai-gitops/lab-resources/agent-service-build-run.yaml -

View the pipelinerun status either in the UI or by command:

# List all PipelineRuns to find the generated name

oc -n userX-ai-agent get pipelineruns

# Watch the PipelineRun status

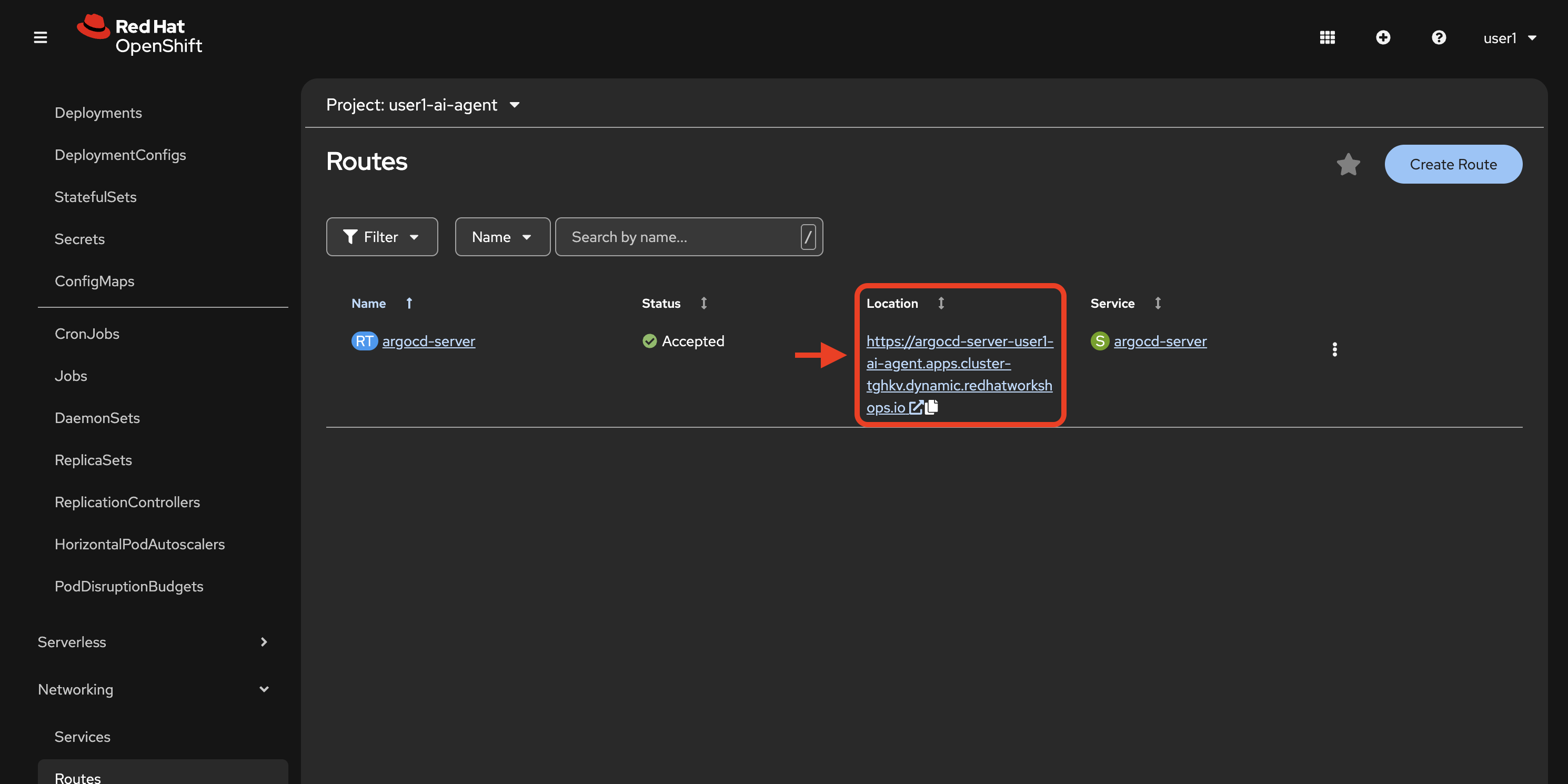

oc -n userX-ai-agent get pipelineruns -wDeploy the agent with ArgoCD

Now we will create a new Argo app that pulls the agent helm chart from your forked repository in GitHub.

-

In your chosen terminal, with your preferred editor, edit the

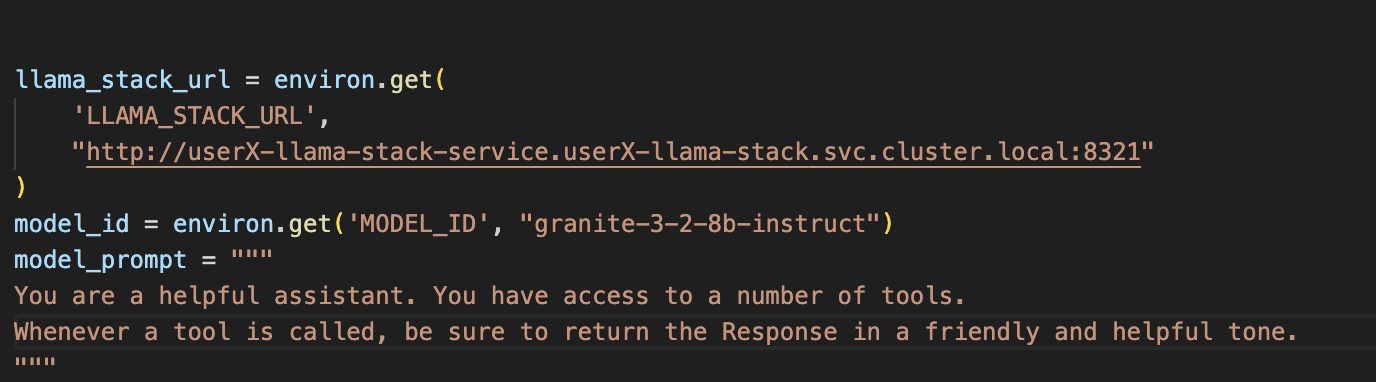

values.yamlfile found inetx-agentic-ai-gitops/agent/chart/vim etx-agentic-ai-gitops/agent/chart/values.yaml -

Replace the values for your OpenShift username and your GitHub user handle as we’ve done in the example below:

namespace: user1-ai-agent registry: image-registry.openshift-image-registry.svc:5000 application_name: ai-agent # Agent Configuration agentConfig: clientTimeout: "600.0" llamaStackUrl: "http://user1-llama-stack-service.user1-llama-stack.svc.cluster.local:8321" maxInferIterations: "50" maxTokens: "5000" modelId: "granite-3-2-8b-instruct" temperature: "0.0" githubOwner: "taylorjordanNC" -

Commit your

values.yamlfile changes to your fork. -

Edit the variables within the

etx-agentic-ai-gitops/lab-resources/ai-agent-app.yaml file. For namespace set `userX-ai-agentand for GitHub repository set your forked repo location. -

Create a new argo application from your command line with the following command (you may choose to create the app via the UI as well if you prefer). Do not forget to edit the variables in the file before deploying.

oc apply -f etx-agentic-ai-gitops/lab-resources/ai-agent-app.yaml

Test your agent’s function with our demo pipeline

We will now test the MCP and model integrations from the playground in the context of your newly deployed agent.

This demo application pipeline models a common CI/CD flow with automated failure recovery. The pipeline has 3 core tasks:

-

fetch-repository: The git-clone task pulls your forked repo at a specific revision. This revision contains an intentional build error that will trigger the agent.

-

build: Attempts to build the Java application and push it to the internal registry using the

step-s2i-buildcontainer. This task has two retries built in before failing. -

trigger-agent: A Tekton

finallytask that always runs regardless of pipeline success or failure. When the pipeline fails, this task identifies the failed pod and calls the AI agent API, passing key variables: namespace, pod name, and container name.

This demonstrates how agentic AI can be integrated into typical CI/CD pipelines to manage failures in an automated, streamlined way.

Let’s test the agent!

Apply the pipeline resource

Using your terminal or the (+) button in the web console, apply the following pipeline resource with your user number substituted into the metadata/namespace placeholder:

| You will need to edit the file directly. Don’t forget! |

oc apply -f etx-agentic-ai-gitops/lab-resources/demo-pipeline.yamlTrigger the pipeline

-

Edit the

lab-resources/demo-pipeline-run.yamlfile, substituting your user number where applicable in the file. -

Create the PipelineRun:

oc -n {etx_agentic_ai_username}-ai-agent create -f etx-agentic-ai-gitops/lab-resources/demo-pipeline-run.yaml -

Monitor the PipelineRun:

oc -n user${YOUR_USER_NUMBER}-ai-agent get pipelineruns -wThe pipeline will fail on the build step, which will trigger the

finallystep which calls the agent service to analyze the failure and create a GitHub issue. -

Confirm pipelinerun detailed failure:

oc get taskrun -l tekton.dev/pipelineRun=java-app-build-run-{UNIQUE_ID} -

Verify in your forked repository that the issue was successfully generated.

What’s Next

Congratulations! With your agent running as a service and integrated with the pipeline trigger, you have established the foundation for a production-ready workflow.

This setup demonstrates:

-

Automated failure response: When pipelines fail, your agent automatically analyzes logs and creates GitHub issues

-

Reduced MTTR: Mean time to resolution decreases as issues are identified and documented immediately

-

Extensible architecture: The same pattern can be applied to other pipelines and failure scenarios

Advanced possibilities

Now that you understand the pattern, consider these potential extensions:

-

Add the agent as a

finallytask in your agent build pipeline to create self-healing CI/CD -

Integrate additional tools via MCP (monitoring systems, ticketing platforms, etc.)

-

Enhance the agent prompt to suggest automated fixes, not just documentation

Coming up in Module 4

In the next module, you will learn how to prepare your agent for production by implementing:

-

Guardrails: Add safety mechanisms using Llama Guard to prevent harmful content in both inputs and outputs

-

Observability: Enable distributed tracing with OpenTelemetry and Tempo to understand agent behavior, identify bottlenecks, and optimize token usage

-

Performance analysis: Use trace data to investigate latency, tool execution patterns, and cost optimization opportunities

These capabilities ensure your agent operates safely, efficiently, and reliably in production environments.