OpenShift Virtualization

Duration: 35 minutes

The Scenario

Some workloads can’t be containerized yet - databases, middleware, Windows services. OpenShift Virtualization lets you run those VMs alongside containers on the same platform using KubeVirt (CNCF project, built on RHEL KVM). Same scheduler, same networking, same monitoring, same RBAC, same oc commands.

In this module you’ll create a VM from the console, see how it’s really just a pod underneath, live migrate it between nodes, clone it, and take snapshots - all with the same tools you use for containers.

Create & Explore a VM

Create a VM

First, create a project for this module:

```shell
oc new-project vm-demo
```

Now create a VM using the console - the same cloud-style experience your teams get in AWS or Azure.

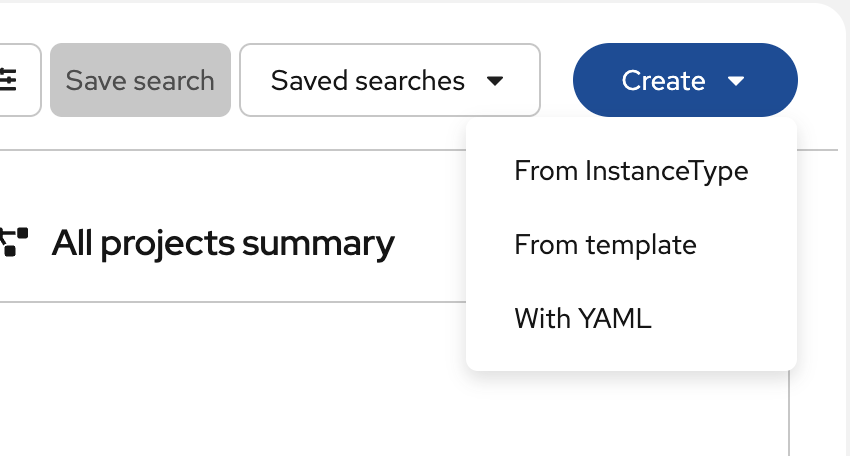

Step 1: Open the Create Wizard

-

In the OpenShift console, switch to the Fleet Virtualization perspective from the top-left dropdown

-

Click the Create dropdown and select From InstanceType

-

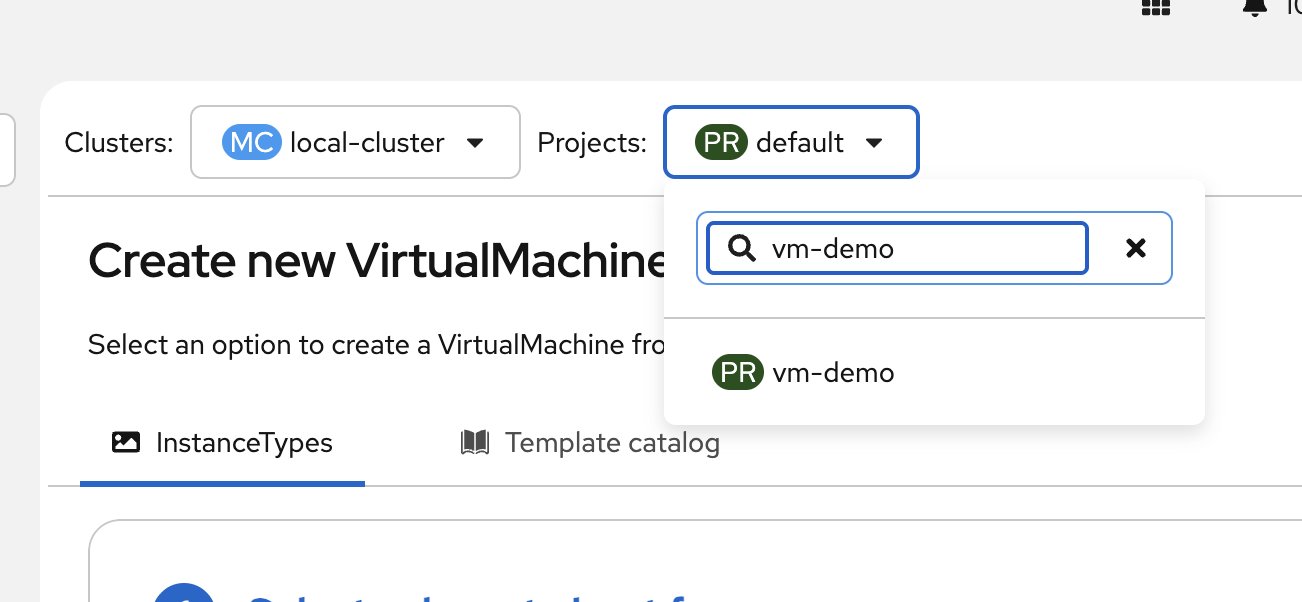

Verify the Project dropdown at the top shows

vm-demo- if not, search for it and select it now

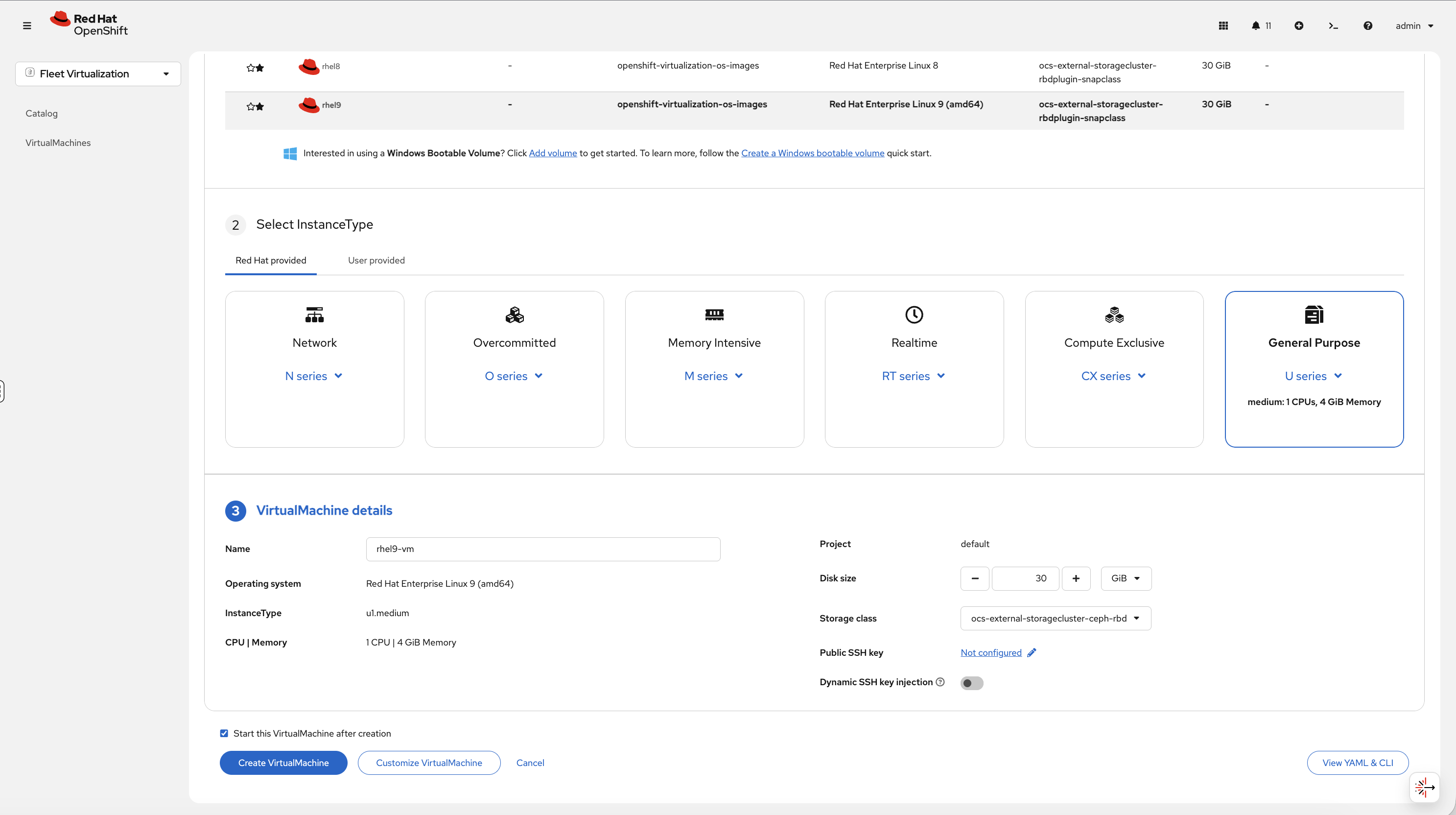

Step 2: Select Boot Source and InstanceType

- At the top of the wizard, select the Red Hat Enterprise Linux 9 boot source from the volume list
- Under Select InstanceType, choose Red Hat provided → General Purpose → medium: 1 CPUs, 4 GiB Memory

OpenShift Virtualization provides InstanceTypes - predefined compute profiles similar to cloud instance families:

| Series | Use case |
|---|---|
| U (Universal) | General-purpose workloads |
| O (Overcommitted) | Dev/test with memory overcommit |
| CX (Compute Exclusive) | CPU-intensive workloads with dedicated vCPUs |
| M (Memory) | Memory-intensive workloads (databases, caching) |
| N (Network) | Network-intensive workloads |
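The same catalog is visible from the CLI. This sketch assumes the Red Hat provided InstanceTypes are cluster-scoped VirtualMachineClusterInstancetype objects named series.size (for example u1.medium, the 1 vCPU / 4 GiB choice above). list_instancetypes is a hypothetical helper, not part of oc.

```shell
# Hypothetical helper: list the cluster-wide InstanceTypes of one series
# (for example u1, o1, cx1, m1, n1). Requires an active oc login.
list_instancetypes() {
  local series="$1"
  # "-o name" prints "<resource>.<group>/<name>"; sed keeps only <name>,
  # grep keeps the names that start with "<series>."
  oc get virtualmachineclusterinstancetypes -o name \
    | sed 's|.*/||' \
    | grep "^${series}\."
}

# Usage: list_instancetypes u1
```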

Step 3: Configure and Create

In the VirtualMachine details section:

- Change the Name to rhel9-vm
- Leave the defaults: 30 GiB disk, default storage class
- Leave Start this VirtualMachine after creation checked
- Click Create VirtualMachine

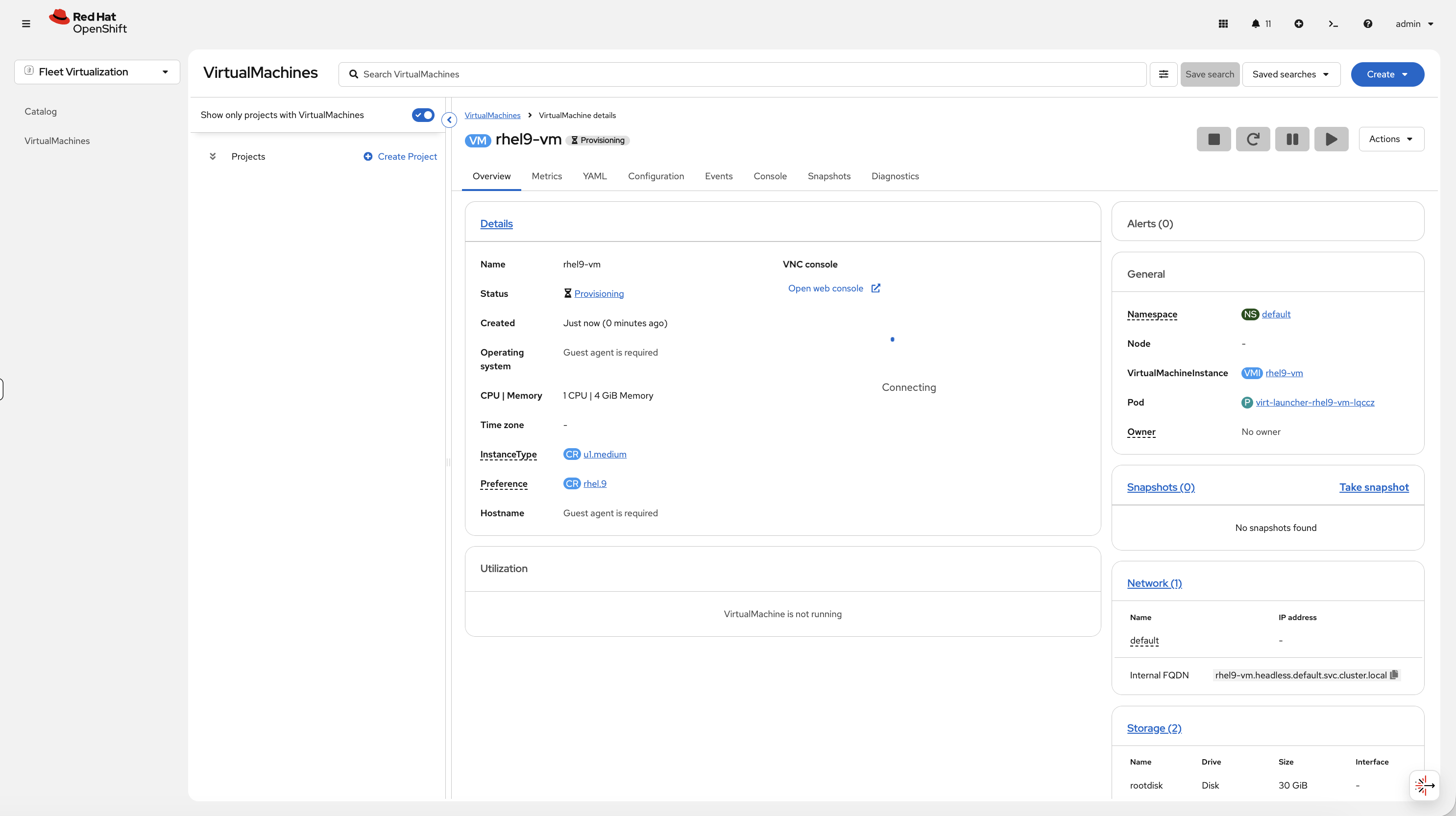

The VM details page opens. You’ll see the status change from Provisioning to Running as the VM boots:

Step 4: Access the VM Console

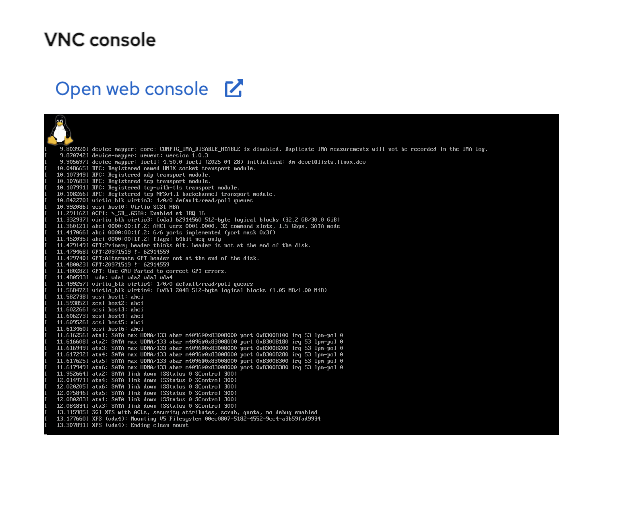

Once the VM is running, click the Console tab. You’ll see the VNC console with the VM’s boot output:

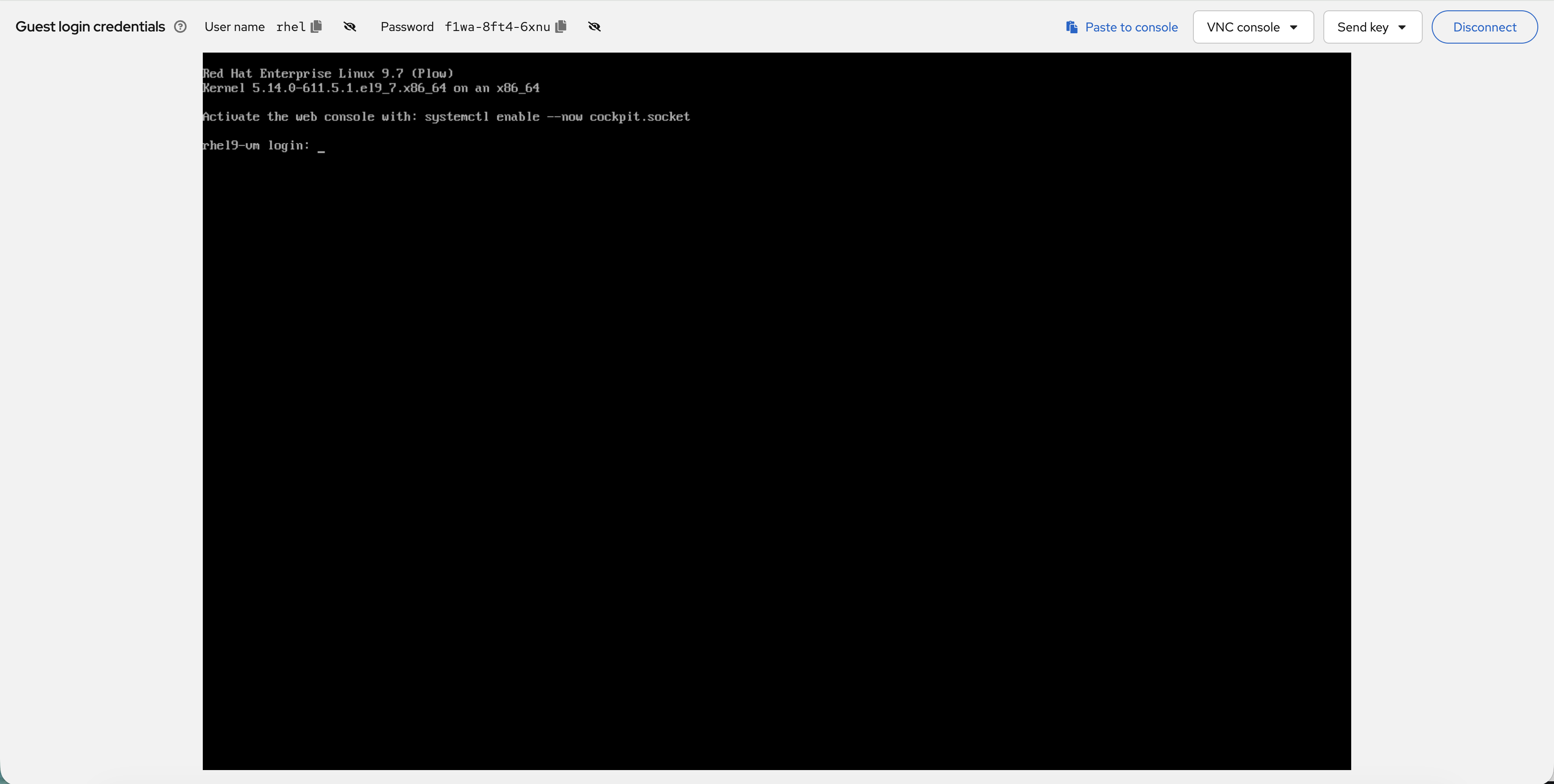

Click Open web console to open the VM console in a new window.

The Guest login credentials are displayed at the top of the console. Use them to log in.

Pick a size, pick a boot image, deploy. No YAML needed.
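No YAML is needed, but the wizard still produces a kubevirt.io/v1 VirtualMachine object, and a declarative equivalent helps once you start scripting VM creation. This is an approximation under stated assumptions, not the exact object the console writes: it assumes the DataSource rhel9 in the openshift-virtualization-os-images namespace, the cluster InstanceType u1.medium, and the cluster preference rhel.9. rhel9_vm_manifest is a hypothetical helper. It also omits the cloud-init volume the console adds, so the credential lookup used later in this module would find nothing for a VM created from it.

```shell
# Hypothetical helper: print a VirtualMachine manifest that approximates the wizard
# choices (RHEL 9 boot source, u1.medium InstanceType, 30 GiB disk, start on create).
# Assumed names: DataSource "rhel9" in openshift-virtualization-os-images, preference "rhel.9".
rhel9_vm_manifest() {
  local name="$1" ns="$2"
  cat <<EOF
apiVersion: kubevirt.io/v1
kind: VirtualMachine
metadata:
  name: ${name}
  namespace: ${ns}
spec:
  runStrategy: Always            # start the VM as soon as it is created
  instancetype:
    kind: VirtualMachineClusterInstancetype
    name: u1.medium              # 1 vCPU, 4 GiB memory
  preference:
    kind: VirtualMachineClusterPreference
    name: rhel.9
  dataVolumeTemplates:
    - metadata:
        name: ${name}-volume
      spec:
        sourceRef:
          kind: DataSource
          name: rhel9
          namespace: openshift-virtualization-os-images
        storage:                 # no storageClassName, so the default class is used
          resources:
            requests:
              storage: 30Gi
  template:
    spec:
      domain:
        devices: {}
      volumes:
        - name: rootdisk
          dataVolume:
            name: ${name}-volume
EOF
}
```

To have the API server validate the output without persisting anything, pipe it through oc apply with server-side dry run: rhel9_vm_manifest rhel9-vm-cli vm-demo | oc apply --dry-run=server -f -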

It’s Just a Pod

This is the key insight for operations teams. Your VM runs inside a virt-launcher pod - which means everything you already know about managing pods applies to VMs. Same scheduler, same RBAC, same resource quotas, same oc commands. You don’t need separate tools, separate teams, or separate runbooks for VMs vs containers:

```shell
oc get pods -n vm-demo
```

You’ll see:

```
NAME                           READY   STATUS    RESTARTS   AGE
virt-launcher-rhel9-vm-xxxxx   2/2     Running   0          3m
```

That’s your VM. It’s a pod. The same oc commands work:

```shell
oc describe pod -l vm.kubevirt.io/name=rhel9-vm -n vm-demo | tail -10
```

Monitoring & Networking

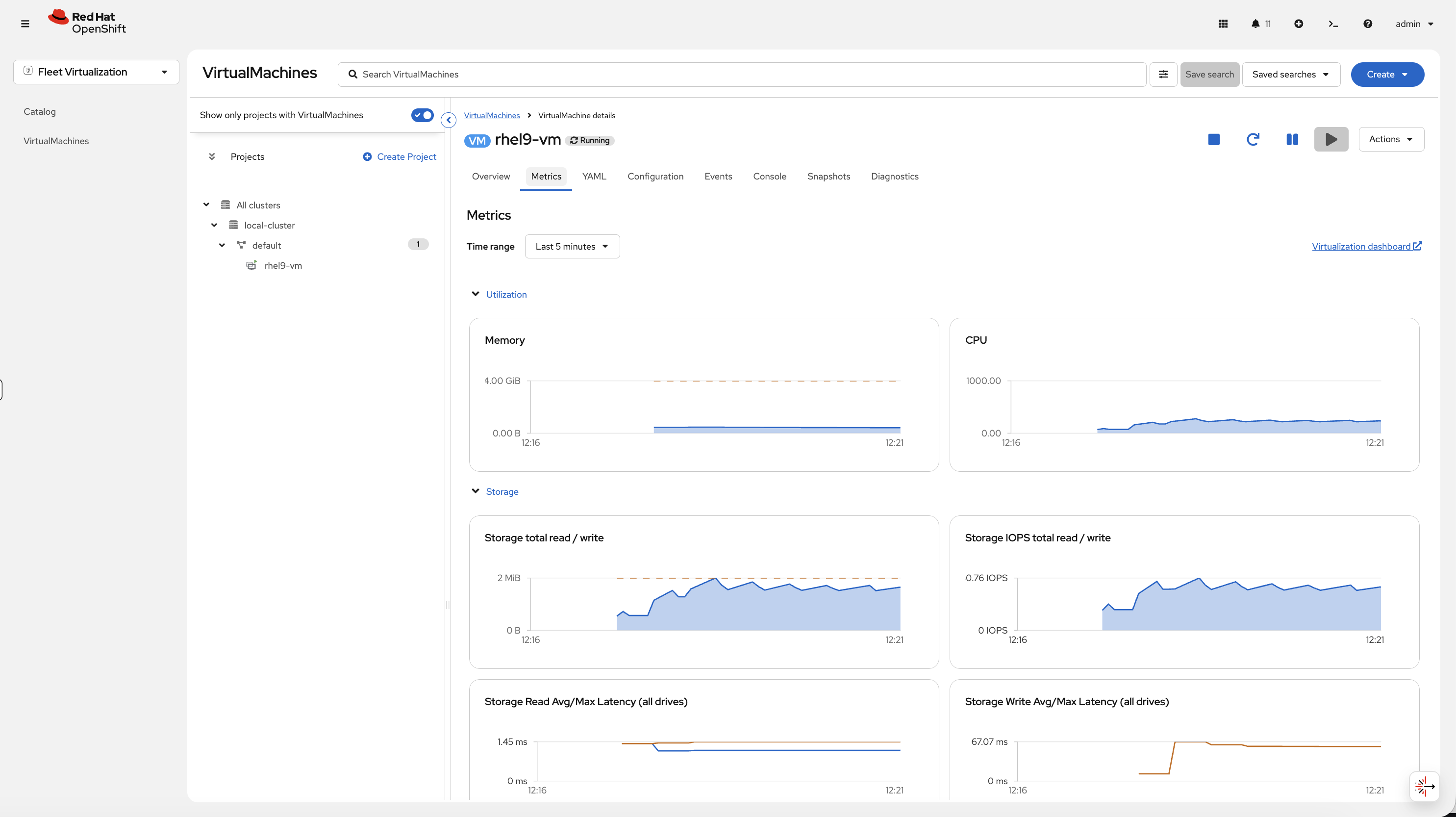

Unified Monitoring

VM metrics flow into the same Prometheus stack as your containers - one dashboard for everything. No need for a separate monitoring tool for your VMs.

On the VM details page, click the Metrics tab to see:

- Memory usage over time
- CPU usage over time
- Storage total read/write
- Storage IOPS
- Storage latency
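Because VM metrics land in the same Prometheus stack, they can also be pulled over the Prometheus HTTP API. A minimal sketch, assuming an OAuth token login (so oc whoami -t returns a token), permission to read cluster metrics (for example the cluster-monitoring-view role), the thanos-querier route in openshift-monitoring, and curl on your PATH. prom_query is a hypothetical helper, and the metric in the usage comment is an assumption to check against the KubeVirt metrics reference.

```shell
# Hypothetical helper: run an instant PromQL query through the Thanos Querier route.
# -k skips TLS certificate verification, which is only acceptable on a lab cluster.
prom_query() {
  local host token
  host=$(oc get route thanos-querier -n openshift-monitoring -o jsonpath='{.spec.host}')
  token=$(oc whoami -t)
  # -G plus --data-urlencode sends the PromQL expression as a URL-encoded GET parameter
  curl -sk -G -H "Authorization: Bearer ${token}" \
    --data-urlencode "query=$1" \
    "https://${host}/api/v1/query"
}

# Usage (metric name is an assumption):
#   prom_query 'kubevirt_vmi_memory_resident_bytes{namespace="vm-demo",name="rhel9-vm"}'
```

The response is the standard Prometheus JSON envelope, so it can be piped to jq or any JSON tool.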

Or query from CLI - the VM uses resources just like any pod:

```shell
oc adm top pods -n vm-demo
```

Expose the VM (Same Networking as Containers)

VMs get pod IPs on the same SDN as containers - same Services, same Routes, same NetworkPolicies. One networking model for VMs and containers:

```shell
oc get vmi rhel9-vm -n vm-demo -o jsonpath='{.status.interfaces[0].ipAddress}' && echo ""
```

Create a Service pointing to the VM:

```shell
oc apply -f - <<EOF
apiVersion: v1
kind: Service
metadata:
  name: rhel9-vm-ssh
  namespace: vm-demo
spec:
  selector:
    vm.kubevirt.io/name: rhel9-vm
  ports:
  - port: 22
    targetPort: 22
EOF
```

```shell
oc get svc rhel9-vm-ssh -n vm-demo
```

The VM is now reachable inside the cluster through the Service - the same way you’d expose a container. Same label selectors, same service types, same networking model.
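Because the Service uses an ordinary label selector, you can check that it picked up the VM the same way you would for a pod: the Service’s endpoint address should equal the VMI’s pod IP. A sketch; svc_targets_vm is a hypothetical helper that only compares the first endpoint address with the VMI’s first interface.

```shell
# Hypothetical helper: confirm a Service selected the VM's virt-launcher pod by
# comparing the first Endpoints address with the VMI's first interface IP.
svc_targets_vm() {
  local svc="$1" vm="$2" ns="$3" ep_ip vmi_ip
  ep_ip=$(oc get endpoints "$svc" -n "$ns" -o jsonpath='{.subsets[0].addresses[0].ip}')
  vmi_ip=$(oc get vmi "$vm" -n "$ns" -o jsonpath='{.status.interfaces[0].ipAddress}')
  echo "Service ${svc} endpoint: ${ep_ip:-<none>}  VMI ${vm} IP: ${vmi_ip:-<none>}"
  # Succeed only when an endpoint exists and it matches the VM's IP
  [ -n "$ep_ip" ] && [ "$ep_ip" = "$vmi_ip" ]
}

# Usage: svc_targets_vm rhel9-vm-ssh rhel9-vm vm-demo
```

An empty endpoint usually means the selector labels don’t match the virt-launcher pod.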

For VMs running web applications (Apache, IIS, Tomcat), you’d create a Service on port 80/443 and a Route with TLS edge termination - the same workflow you use for containers. When migrating multi-tier applications, move the VMs with the Migration Toolkit for Virtualization (MTV), wire them up with Services and Routes, and they’re live on OpenShift without changing the application.
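The web-application case can be sketched as a Service plus an edge-terminated Route. This assumes a web server listening on plain HTTP port 80 inside the guest (the RHEL 9 VM in this module has none by default) and uses hypothetical names of the form vm-name-http; web_vm_manifests is a hypothetical helper.

```shell
# Hypothetical helper: print a Service on port 80 plus an edge-terminated Route
# for a VM running a web server on plain HTTP port 80 inside the guest.
web_vm_manifests() {
  local vm="$1" ns="$2"
  cat <<EOF
apiVersion: v1
kind: Service
metadata:
  name: ${vm}-http
  namespace: ${ns}
spec:
  selector:
    vm.kubevirt.io/name: ${vm}    # same label selector as the SSH Service
  ports:
    - port: 80
      targetPort: 80
---
apiVersion: route.openshift.io/v1
kind: Route
metadata:
  name: ${vm}-http
  namespace: ${ns}
spec:
  to:
    kind: Service
    name: ${vm}-http
  port:
    targetPort: 80
  tls:
    termination: edge                        # TLS ends at the router; HTTP to the VM
    insecureEdgeTerminationPolicy: Redirect  # send http:// clients to https://
EOF
}
```

Usage: web_vm_manifests rhel9-vm vm-demo | oc apply -f -, then oc get route rhel9-vm-http -n vm-demo -o jsonpath='{.spec.host}' prints the hostname the router assigned. With edge termination the certificate lives on the router, so nothing inside the VM needs TLS configuration.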

VM Lifecycle

Live Migration

This is the feature that makes OpenShift Virtualization production-ready. When you need to patch a node, upgrade the OS, or replace hardware, you drain the node. Containers are rescheduled, and VMs whose eviction strategy is LiveMigrate (the OpenShift Virtualization default) are live migrated to another node, so a single oc adm drain handles both. VM workloads keep running through the maintenance window.

Step 1: Prepare for Migration

Live migration requires compatible CPU features on the source and target nodes. Workshop clusters can have mixed Intel and AMD nodes in the pool - VMs can only migrate between nodes with the same CPU vendor because the CPU instruction sets are incompatible. This script detects your VM’s CPU vendor and pins it to the vendor with 2+ nodes so there’s a valid migration target:

```shell
bash <(curl -sL https://raw.githubusercontent.com/rhpds/openshift-days-ops-showroom/main/support/11-virtualization/prepare-migration.sh)
```

This may take a minute if the VM needs to be moved to a different node. Wait for the Ready - Node: output before continuing.

Note: In production, you’d ensure homogeneous CPU hardware in your cluster or use a specific CPU model in the VM’s spec. This is a common operational consideration for any KubeVirt/OpenShift Virtualization deployment.
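Before triggering a migration, you can ask KubeVirt whether the VM can be live migrated at all. The VMI reports this through its LiveMigratable condition, which covers VM-level blockers such as a disk on ReadWriteOnce storage. It does not check whether a node with a compatible CPU exists; that is what the preparation script handles. vm_migratable is a hypothetical helper.

```shell
# Hypothetical helper: report the VMI's LiveMigratable condition and its reason, if any.
# Returns 0 only when the condition status is "True".
vm_migratable() {
  local vm="$1" ns="$2" status reason
  status=$(oc get vmi "$vm" -n "$ns" \
    -o jsonpath='{.status.conditions[?(@.type=="LiveMigratable")].status}')
  reason=$(oc get vmi "$vm" -n "$ns" \
    -o jsonpath='{.status.conditions[?(@.type=="LiveMigratable")].reason}')
  if [ -n "$reason" ]; then
    echo "LiveMigratable: ${status:-unknown} (${reason})"
  else
    echo "LiveMigratable: ${status:-unknown}"
  fi
  [ "$status" = "True" ]
}

# Usage: vm_migratable rhel9-vm vm-demo
```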

Step 2: Note the Current Node

Check which node the VM is on before migration:

```shell
oc get vmi rhel9-vm -n vm-demo -o jsonpath='Node: {.status.nodeName}' && echo ""
```

Step 3: Trigger the Migration

-

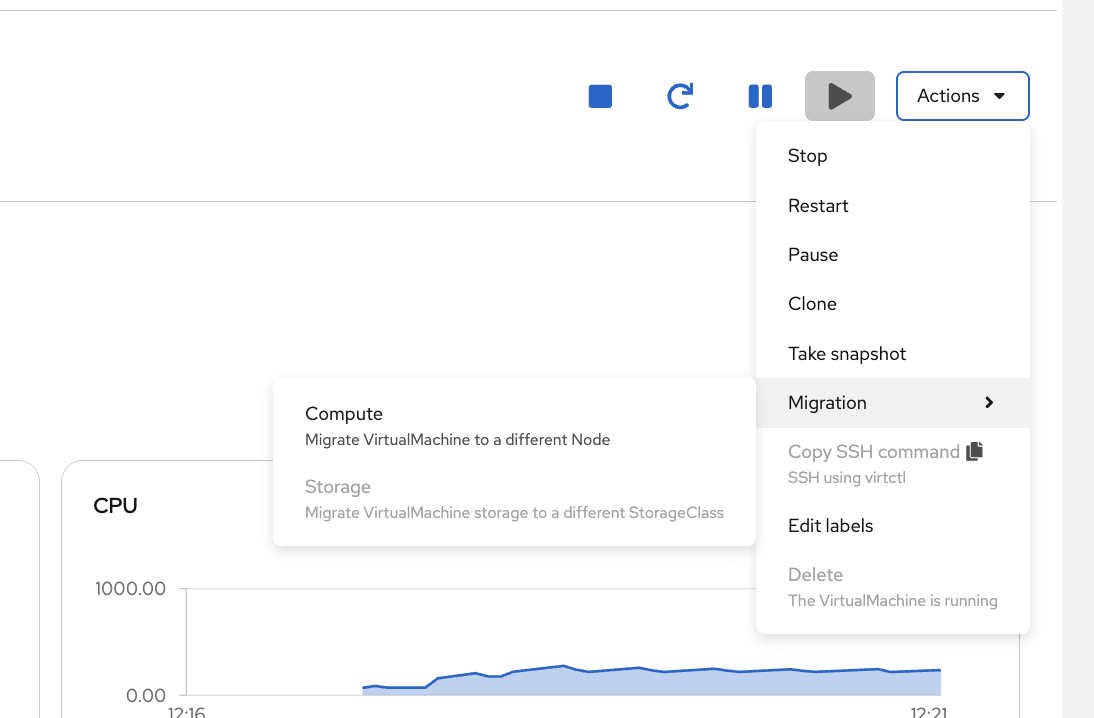

On the VM details page, click the Actions dropdown in the top-right corner

-

Hover over Migration to expand the submenu

-

Select Compute - this migrates the VM’s running state (CPU/memory) to a different node

-

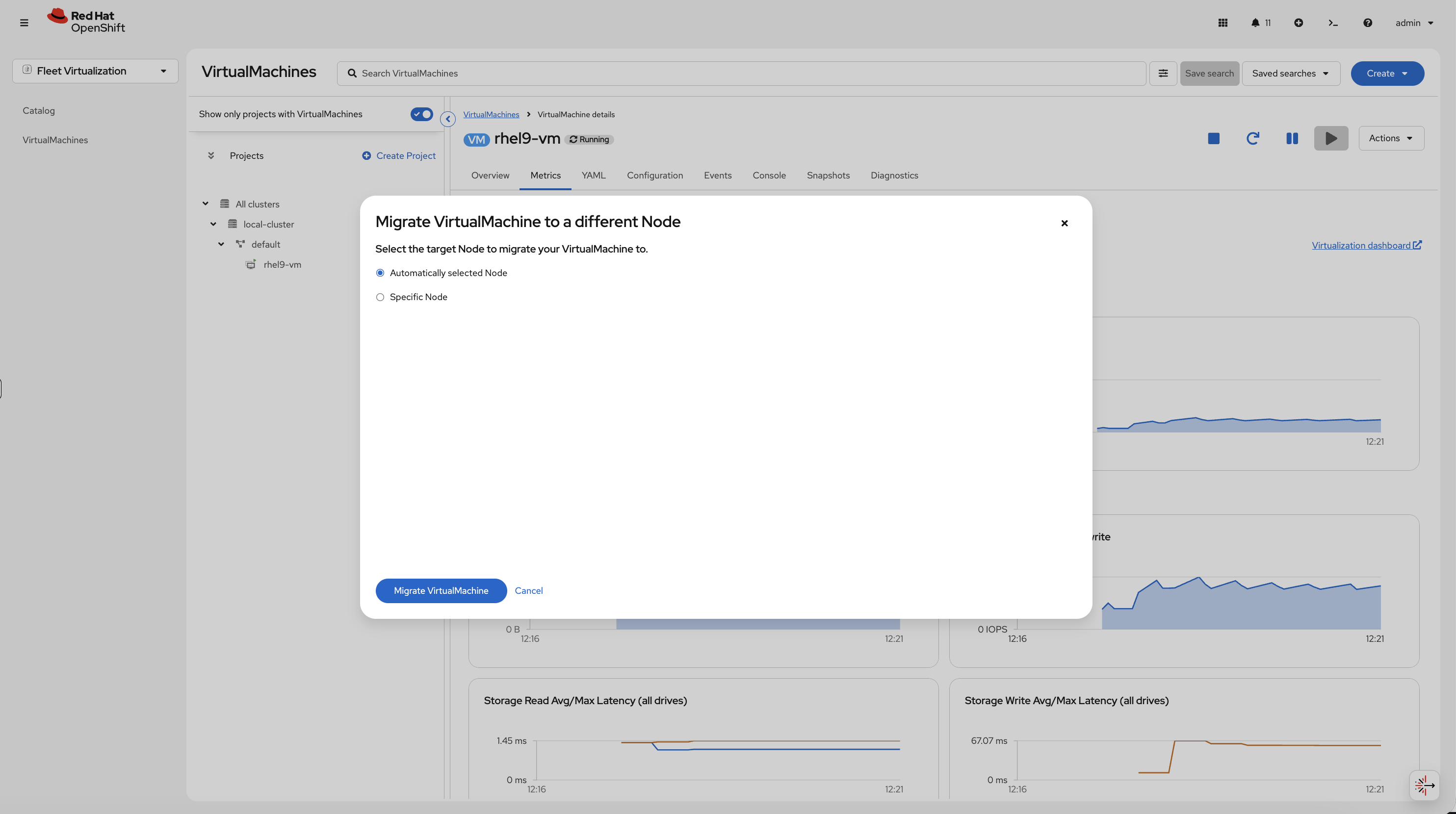

In the migration dialog, leave Automatically selected Node selected and click Migrate VirtualMachine
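The same request can be made from the CLI. A live migration is represented by a VirtualMachineInstanceMigration object that names the VMI to move; as with Automatically selected Node, the scheduler picks the target. A sketch for scripting outside this walkthrough; vm_migration_manifest is a hypothetical helper, and because it uses generateName its output must go to oc create rather than oc apply.

```shell
# Hypothetical helper: print a VirtualMachineInstanceMigration for one VMI.
# generateName gives each request a unique name, so use "oc create", not "oc apply".
vm_migration_manifest() {
  local vm="$1" ns="$2"
  cat <<EOF
apiVersion: kubevirt.io/v1
kind: VirtualMachineInstanceMigration
metadata:
  generateName: ${vm}-migration-
  namespace: ${ns}
spec:
  vmiName: ${vm}
EOF
}

# Usage:
#   vm_migration_manifest rhel9-vm vm-demo | oc create -f -
#   oc get virtualmachineinstancemigrations -n vm-demo
```

virtctl migrate rhel9-vm -n vm-demo creates an equivalent migration request in a single command.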

Step 4: Watch the Migration

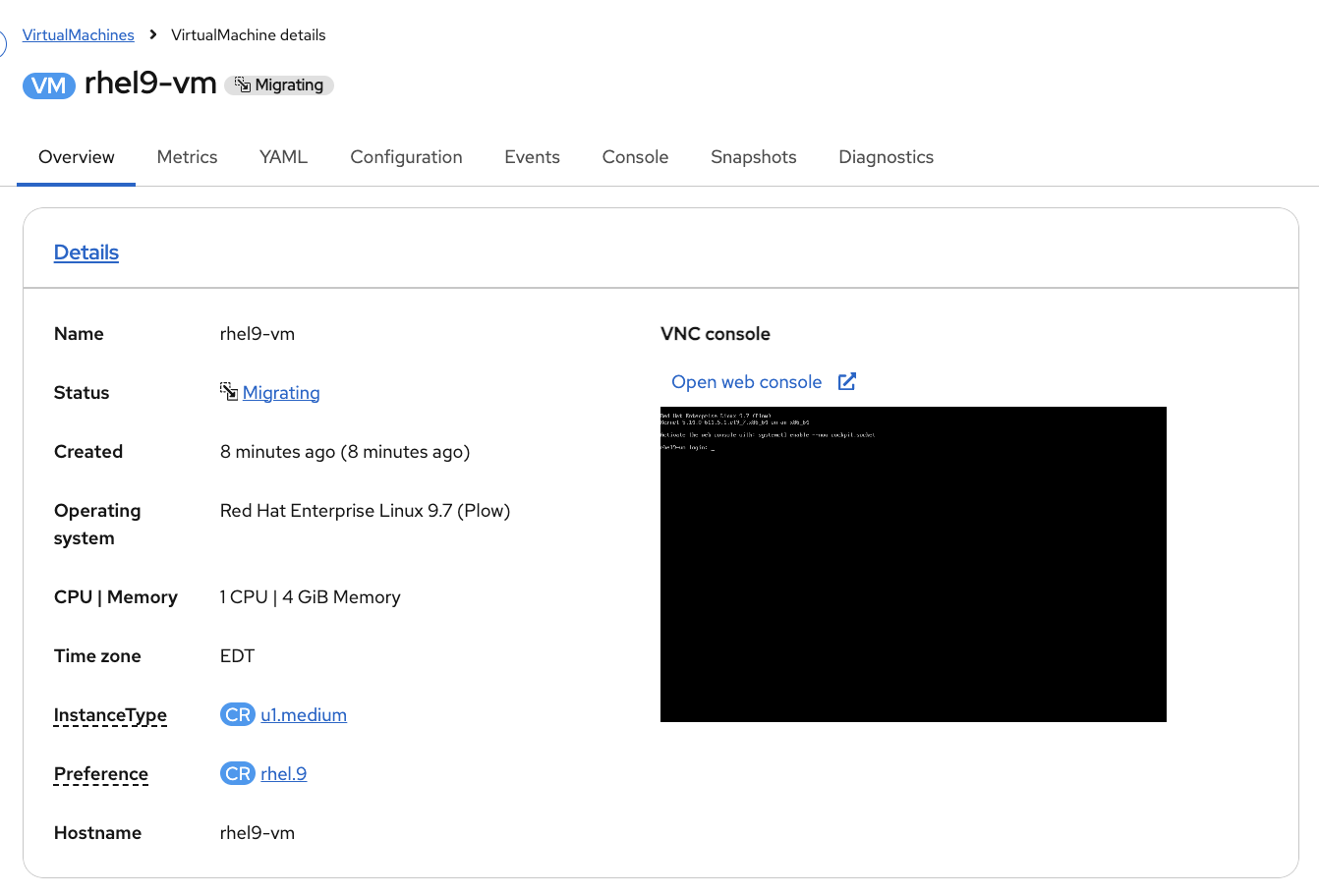

The VM status changes to Migrating while the live migration is in progress:

The migration completes quickly - typically within 30 seconds. Once the status returns to Running, verify the node changed:

```shell
oc get vmi rhel9-vm -n vm-demo -o jsonpath='Node: {.status.nodeName}' && echo ""
```

The VM moved while still running. No downtime, no reconnection needed.
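When a migration is scripted (for example as part of node maintenance), polling the VMI’s node is a simple way to wait for it to finish. This sketch assumes the VMI’s status.nodeName switches to the target node once the migration completes. wait_for_node_change is a hypothetical helper with a default 120-second limit.

```shell
# Hypothetical helper: wait until the VMI reports a node other than OLD_NODE.
# Arguments: VM name, namespace, OLD_NODE, optional limit in seconds (default 120).
wait_for_node_change() {
  local vm="$1" ns="$2" old="$3" limit="${4:-120}" waited=0 node
  while [ "$waited" -lt "$limit" ]; do
    node=$(oc get vmi "$vm" -n "$ns" -o jsonpath='{.status.nodeName}')
    if [ -n "$node" ] && [ "$node" != "$old" ]; then
      echo "Moved: ${old} -> ${node}"
      return 0
    fi
    sleep 5
    waited=$((waited + 5))
  done
  echo "Still on ${old} after ${limit}s" >&2
  return 1
}

# Usage:
#   OLD=$(oc get vmi rhel9-vm -n vm-demo -o jsonpath='{.status.nodeName}')
#   virtctl migrate rhel9-vm -n vm-demo
#   wait_for_node_change rhel9-vm vm-demo "$OLD"
```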

Clone a VM

Need another copy of a VM - for testing, dev environments, or scaling out? Clone it. This creates a full copy of the VM’s disk and configuration.

Step 1: Start the Clone

-

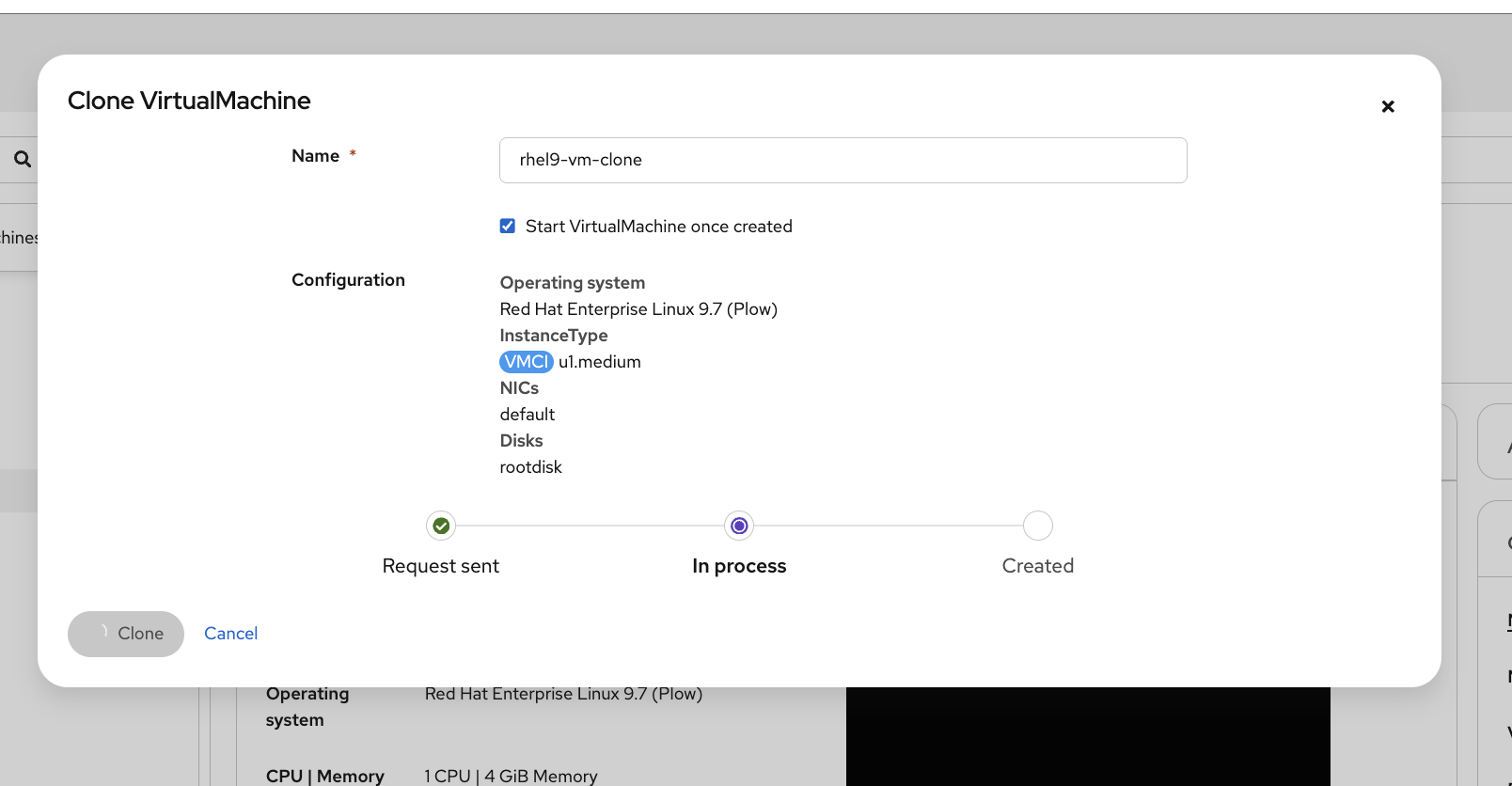

On the rhel9-vm details page, click Actions → Clone

-

Change the name to

rhel9-vm-clone -

Check the Start VirtualMachine once created checkbox

-

Click Clone

Step 2: Watch the Clone Progress

The dialog shows the clone progress: Request sent → In process → Created:

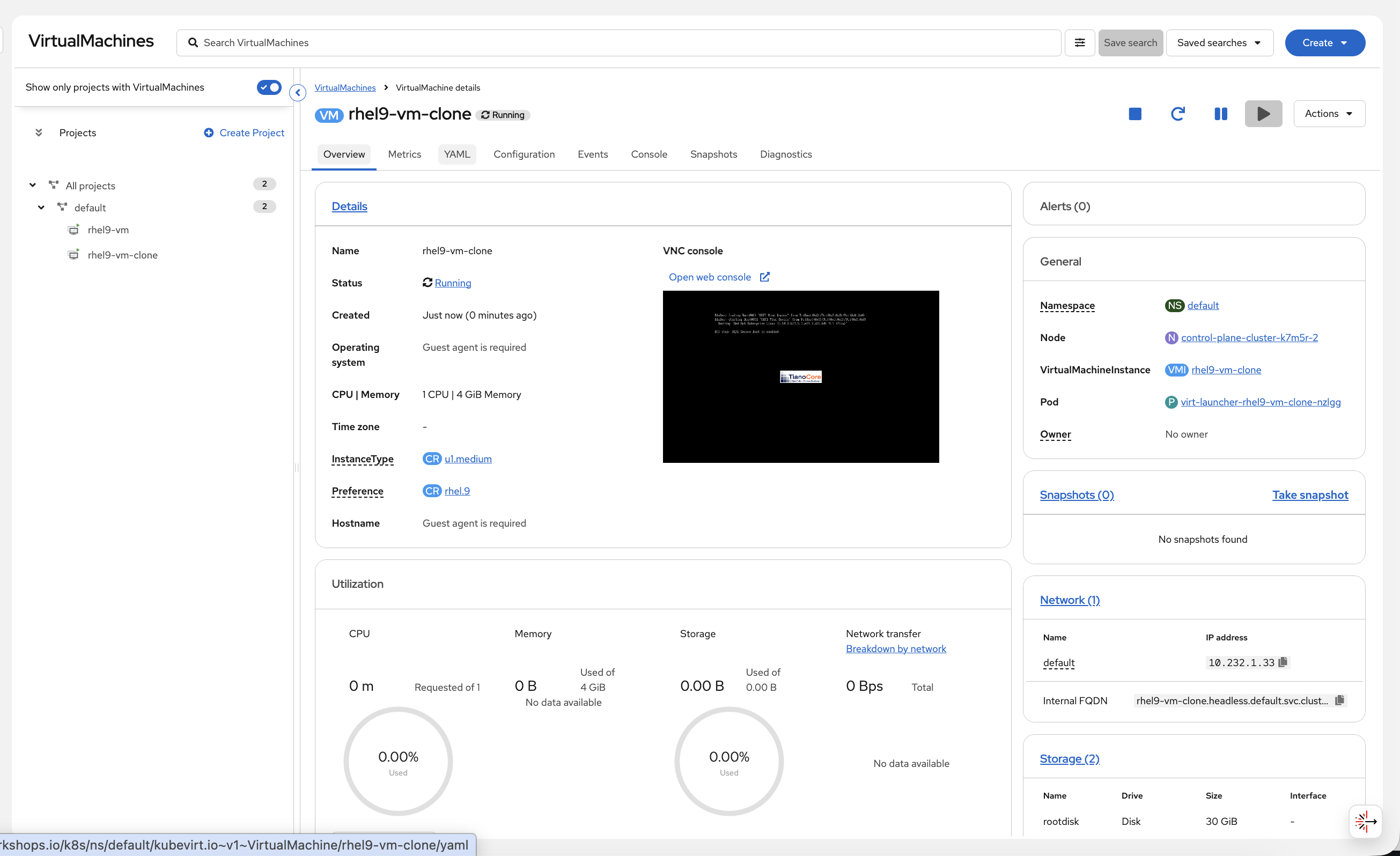

Once complete, the console navigates to the clone’s details page. You’ll see both VMs in the left sidebar:

The clone has its own independent disk - changes to one don’t affect the other. This is how you’d create golden images: configure a VM exactly how you want it, then clone it for each environment.
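Clones can also be requested declaratively, which helps when a pipeline stamps out copies of a golden image. A sketch assuming the VirtualMachineClone API in the clone.kubevirt.io group; the v1alpha1 version used here is an assumption, so check what your cluster serves with oc api-resources --api-group=clone.kubevirt.io. vm_clone_manifest is a hypothetical helper.

```shell
# Hypothetical helper: print a VirtualMachineClone request copying SRC into a new VM DST.
# apiVersion is an assumption; confirm with: oc api-resources --api-group=clone.kubevirt.io
vm_clone_manifest() {
  local src="$1" dst="$2" ns="$3"
  cat <<EOF
apiVersion: clone.kubevirt.io/v1alpha1
kind: VirtualMachineClone
metadata:
  name: ${dst}-clone-request
  namespace: ${ns}
spec:
  source:
    apiGroup: kubevirt.io
    kind: VirtualMachine
    name: ${src}
  target:
    apiGroup: kubevirt.io
    kind: VirtualMachine
    name: ${dst}
EOF
}

# Usage:
#   vm_clone_manifest rhel9-vm rhel9-vm-clone2 vm-demo | oc create -f -
#   oc get virtualmachineclones -n vm-demo
```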

Note: Click back to rhel9-vm in the left sidebar before continuing. The next steps (snapshot and restore) should be done on the original VM, not the clone.

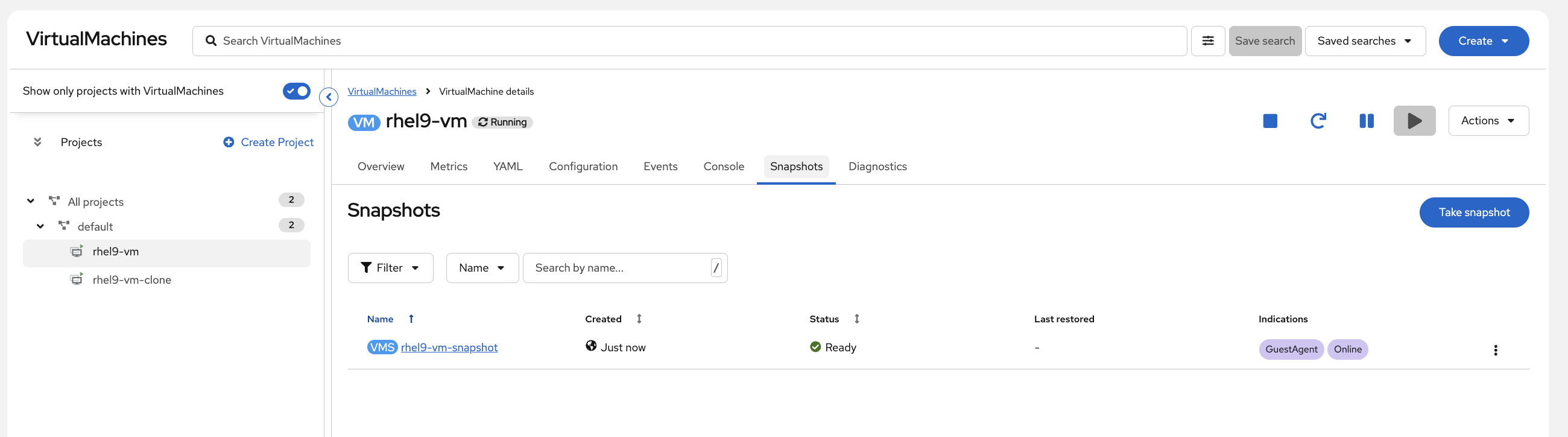

Snapshots

Every operations team has been burned by a patch that broke something. Snapshots give you a safety net - capture the VM’s disk and configuration before a change, and roll back in seconds if it goes wrong.

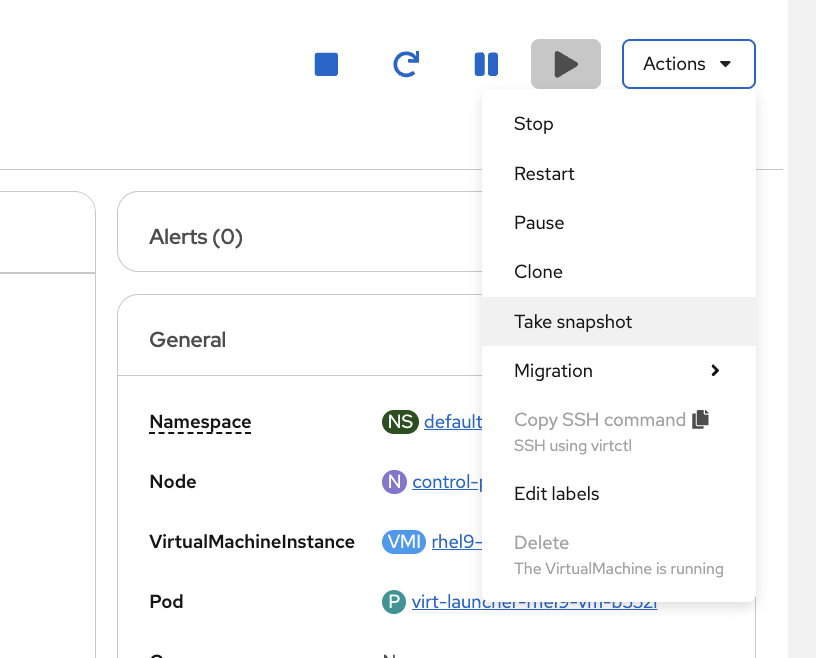

Step 1: Take a Snapshot

-

Navigate to rhel9-vm (not the clone) and open its details page. Click Actions → Take snapshot

-

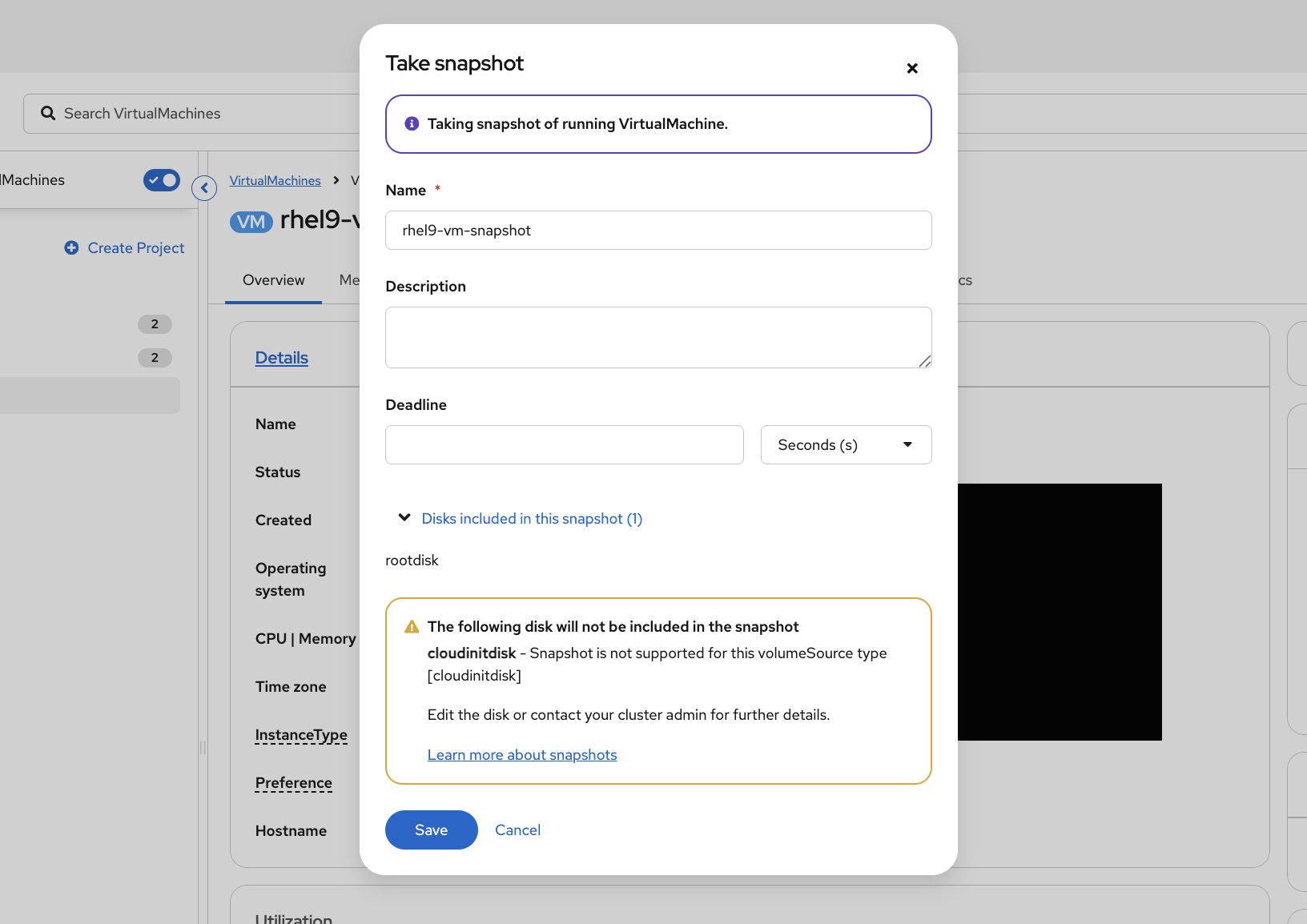

Leave the default name (

rhel9-vm-snapshot) and click Save

The warning about cloudinitdisk not being included is expected - cloud-init only runs on first boot and doesn’t need to be captured.
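The console snapshot is a VirtualMachineSnapshot object, which you can also create from the CLI, for example right before a scripted patch run. A sketch assuming the snapshot.kubevirt.io/v1beta1 API (older releases may serve only v1alpha1; check with oc api-resources --api-group=snapshot.kubevirt.io). vm_snapshot_manifest is a hypothetical helper whose snapshot name defaults to vm-name-snapshot.

```shell
# Hypothetical helper: print a VirtualMachineSnapshot for VM; NAME defaults to "<vm>-snapshot".
# apiVersion is an assumption; confirm with: oc api-resources --api-group=snapshot.kubevirt.io
vm_snapshot_manifest() {
  local vm="$1" ns="$2" name="${3:-$1-snapshot}"
  cat <<EOF
apiVersion: snapshot.kubevirt.io/v1beta1
kind: VirtualMachineSnapshot
metadata:
  name: ${name}
  namespace: ${ns}
spec:
  source:
    apiGroup: kubevirt.io
    kind: VirtualMachine
    name: ${vm}
EOF
}

# Usage (scripted, outside this walkthrough):
#   vm_snapshot_manifest rhel9-vm vm-demo rhel9-vm-before-patch | oc apply -f -
#   oc wait virtualmachinesnapshot/rhel9-vm-before-patch -n vm-demo --for=condition=Ready --timeout=5m
```

In this module, stick with the console snapshot so the Snapshots tab shows a single entry in the restore step.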


Step 3: Break Something

The snapshot is your safety net - let’s prove it works by breaking the VM. First, get the VM login credentials:

```shell
# Pull the user and password lines out of the cloud-init userData the console generated
DATA=$(oc get vm rhel9-vm -n vm-demo -o jsonpath='{.spec.template.spec.volumes[?(@.name=="cloudinitdisk")].cloudInitNoCloud.userData}')
echo "Username: $(echo "$DATA" | grep 'user:' | awk '{print $2}')"
echo "Password: $(echo "$DATA" | grep 'password:' | awk '{print $2}')"
```

Now connect to the VM console:

```shell
virtctl console rhel9-vm -n vm-demo
```

Log in with the credentials above, then run these commands inside the VM to make changes we can verify after restore:

```shell
touch /home/rhel/important-data.txt
cat /etc/hostname
sudo hostnamectl set-hostname broken-vm
cat /etc/hostname
```

You should see the hostname change from rhel9-vm to broken-vm. When done, click the reload button on the Terminal tab to exit the console and return to your normal terminal. In a regular terminal, press Ctrl+] to detach from virtctl console instead.

Now the VM has changed - a new file exists and the hostname has been modified. Let’s restore to the snapshot and prove both changes are reverted.

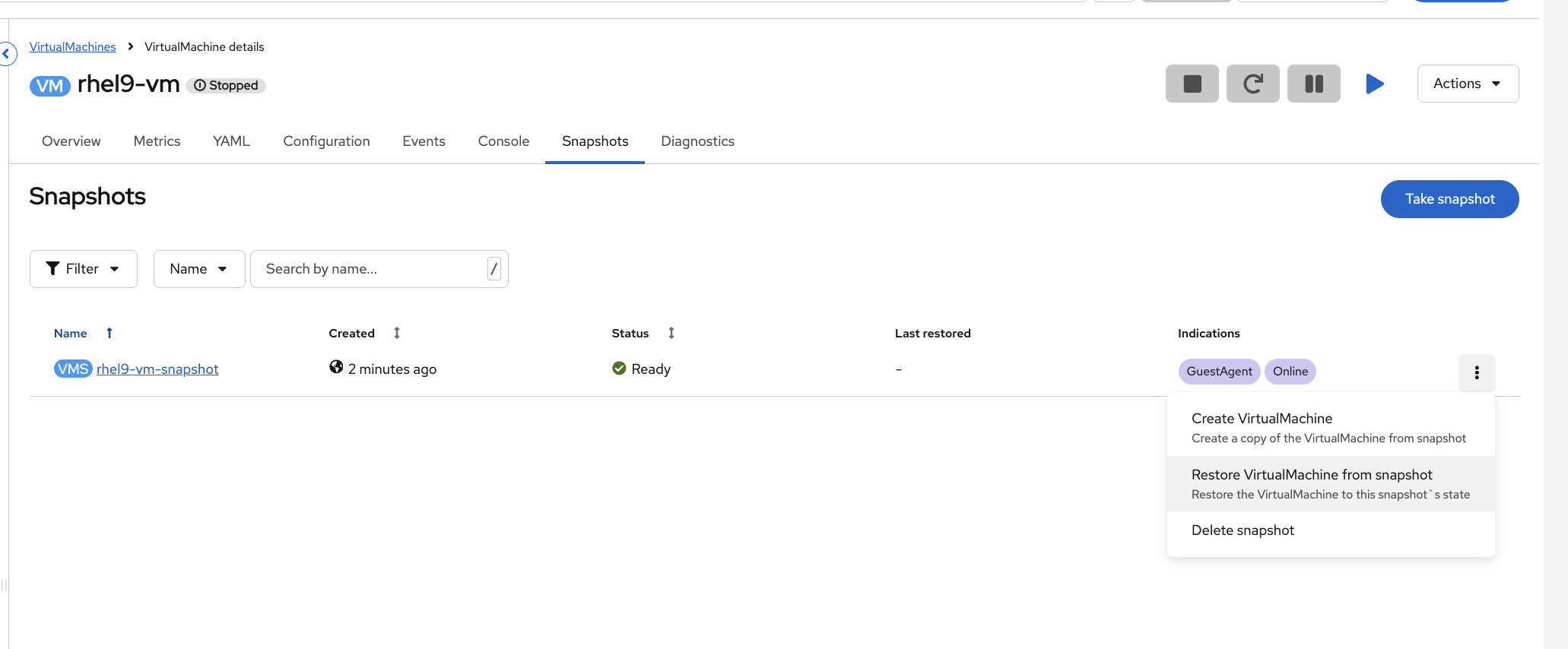

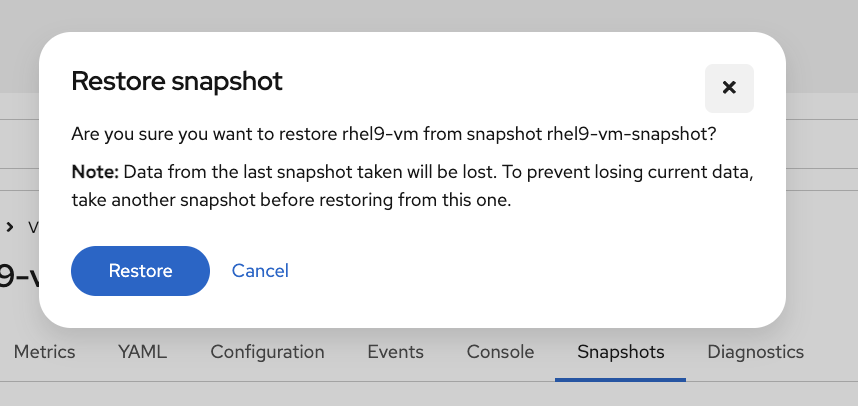

Step 4: Restore from Snapshot

- Stop the VM - click the Stop button (square icon) in the top-right action buttons
- Once the VM shows Stopped, go to the Snapshots tab, click the kebab menu (three dots) next to the snapshot, and select Restore VirtualMachine from snapshot
- Click Restore to confirm
- Start the VM again by clicking the Start button (play icon)
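The console restore steps map onto three CLI steps: stop the VM, create a VirtualMachineRestore that points at the snapshot, and start the VM again. A sketch under the same API-version assumption as snapshots (snapshot.kubevirt.io/v1beta1). vm_restore_manifest is a hypothetical helper, and the commented commands show one way to wait between steps.

```shell
# Hypothetical helper: print a VirtualMachineRestore that rolls VM back to snapshot SNAP.
vm_restore_manifest() {
  local vm="$1" ns="$2" snap="$3"
  cat <<EOF
apiVersion: snapshot.kubevirt.io/v1beta1
kind: VirtualMachineRestore
metadata:
  name: ${vm}-restore-${snap}
  namespace: ${ns}
spec:
  target:
    apiGroup: kubevirt.io
    kind: VirtualMachine
    name: ${vm}
  virtualMachineSnapshotName: ${snap}
EOF
}

# Usage (stop the VM first, as in the console flow):
#   virtctl stop rhel9-vm -n vm-demo
#   oc wait vmi/rhel9-vm -n vm-demo --for=delete --timeout=3m
#   vm_restore_manifest rhel9-vm vm-demo rhel9-vm-snapshot | oc apply -f -
#   oc wait virtualmachinerestore/rhel9-vm-restore-rhel9-vm-snapshot -n vm-demo --for=condition=Ready --timeout=5m
#   virtctl start rhel9-vm -n vm-demo
```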

Once the VM is running, get the login credentials again:

```shell
# Pull the user and password lines out of the cloud-init userData the console generated
DATA=$(oc get vm rhel9-vm -n vm-demo -o jsonpath='{.spec.template.spec.volumes[?(@.name=="cloudinitdisk")].cloudInitNoCloud.userData}')
echo "Username: $(echo "$DATA" | grep 'user:' | awk '{print $2}')"
echo "Password: $(echo "$DATA" | grep 'password:' | awk '{print $2}')"
```

Connect to the VM console:

```shell
virtctl console rhel9-vm -n vm-demo
```

You may see boot output (kernel messages, systemd services) scrolling. Wait for the rhel9-vm login: prompt to appear, then press Enter if needed.


Log in with the credentials above, then verify the restore worked:

```shell
ls /home/rhel/important-data.txt
cat /etc/hostname
```

ls should show No such file or directory: the file you created is gone. cat /etc/hostname should show rhel9-vm (the original hostname), not broken-vm, so the hostname change was reverted too. The disk was restored to its exact state at the moment you took the snapshot. This is how you roll back a failed OS patch or a broken config change without rebuilding from scratch.

Click the reload button on the Terminal tab to exit the console (or press Ctrl+] if you are in a regular terminal).

Cleanup & Summary

Summary

| Feature | What it means for ops |
|---|---|
| VMs are pods | Same scheduler, RBAC, resource quotas, and oc commands as containers |
| InstanceTypes | Cloud-style provisioning - pick a size, pick an image, deploy |
| Same networking | Services and Routes work for VMs and containers |
| Same monitoring | Prometheus dashboards show VM and container metrics together |
| Live migration | Drain nodes for maintenance without VM downtime |
| Cloning | Rapid provisioning from golden images for dev/test/staging |
| Snapshots | Point-in-time rollback before patches or upgrades |

The value: Run VMs and containers side-by-side on one platform. One set of tools, one set of skills. Migrate existing VMs with MTV when ready, or run them indefinitely - OpenShift supports both. Windows Server is SVVP-certified, and RHEL/Fedora/CentOS boot from pre-cached images in seconds.

Cleanup

Deleting VM disks and namespaces takes a couple of minutes. This runs in the background so you can move on to the next module immediately.

```shell
# Stop both VMs, then delete the namespace, all in a background subshell
(
  virtctl stop rhel9-vm -n vm-demo 2>/dev/null || true
  virtctl stop rhel9-vm-clone -n vm-demo 2>/dev/null || true
  sleep 10
  oc delete namespace vm-demo --ignore-not-found --wait=false
) &>/dev/null &
echo "Cleanup running in background - you can continue to the next module"
```

Additional Resources

- OpenShift Virtualization: Virtualization documentation
- virtctl Reference: virtctl commands
- Live Migration: Live migration documentation
- VM Snapshots: Backup and restore documentation